In brief: Russia is once again running online campaigns in an attempt to manipulate the presidential elections in the United States, albeit at a slower pace than what we saw during previous elections. Microsoft writes that the country is mainly trying to undermine support for Ukraine and NATO among US audiences.

Russia’s US election influence campaign has picked up pace over the last two months, writes Microsoft, but the less contested primary season means it hasn’t been as intense as in 2016 and 2020.

Russia’s primary focus in its campaign is building support for its war in Ukraine through disinformation, reducing support for NATO, and causing infighting within the United States, using traditional and social media and a mix of covert and overt campaigns.

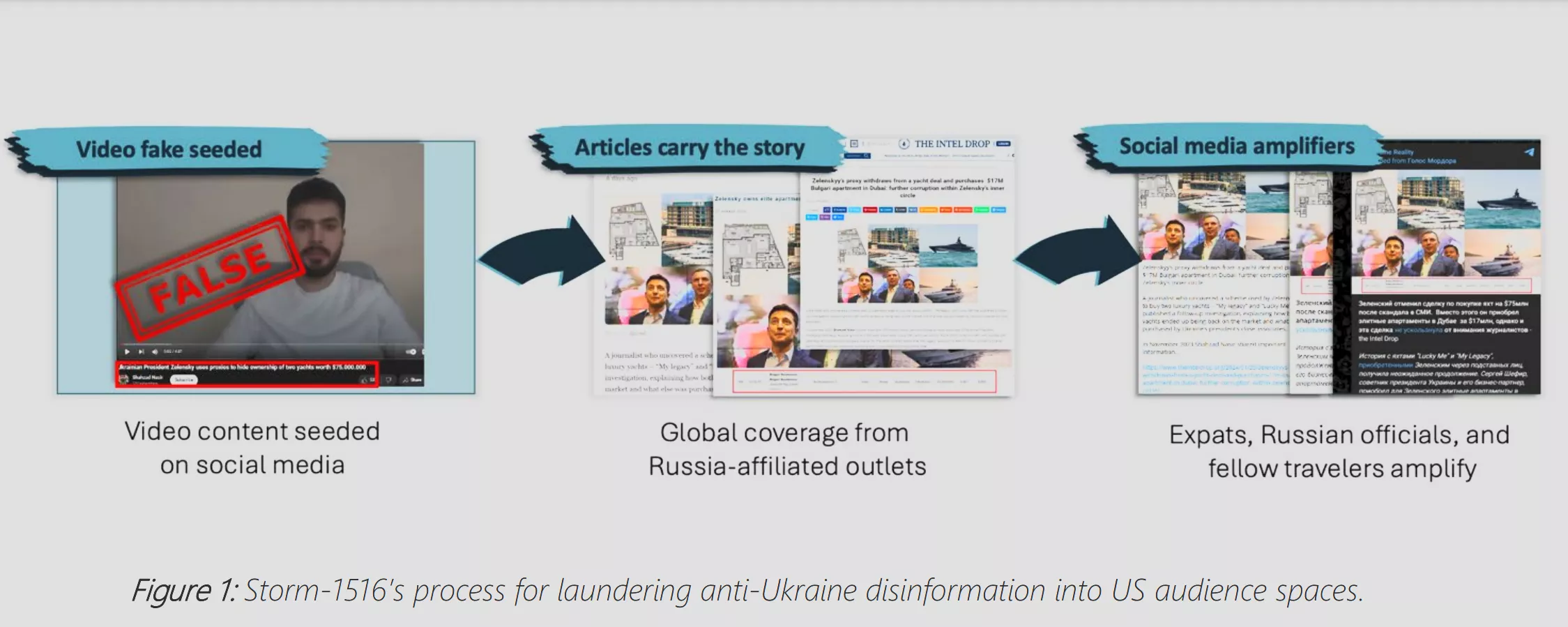

Microsoft writes that the Russian-affiliated group Storm-1516 pushes an anti-Ukraine message by creating a video of someone presenting as a whistleblower or citizen journalist spreading a pro-Russian narrative about the war. The video is then covered by a seemingly unaffiliated global network of covertly managed websites, including DC Weekly and The Intel Drop, before being amplified by Russian expats, officials, and fellow travelers. US audiences then repeat and repost the disinformation, likely unaware of its source.

Another group highlighted by Microsoft is Star Blizzard, aka Cold River. It focuses on Western think tanks, and while it still has no connection to the 2024 elections, its focus on US political figures may be the first in a series of hacking campaigns designed to drive Kremlin-favored outcomes heading into November.

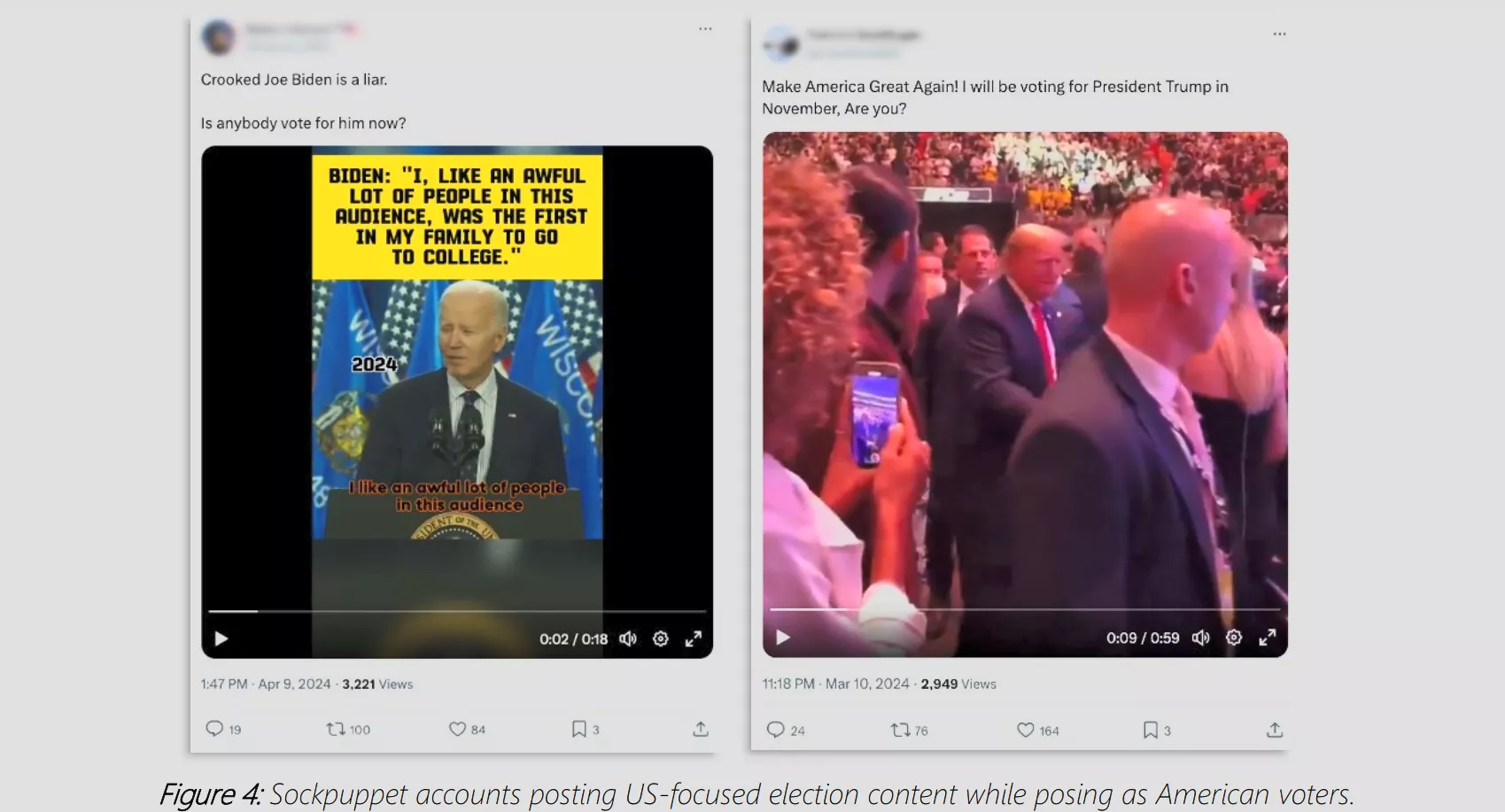

There have been many concerns over foreign adversaries using advanced AI to influence US elections. Microsoft said that widespread use of deepfaked videos has so far not been observed, but AI-enhanced content and AI-generated fake audio are likely to have more success.

Microsoft writes that AI-enhanced content is more influential than fully AI-generated content, AI audio is more impactful than AI video, and fake content purporting to come from a private setting, such as a phone call, is more effective than fake content from a public setting. The company adds that impersonations of lesser-known people work better than impersonations of prominent figures such as world leaders.

We’ve already seen examples of faked audio being used to influence people. A 39-second robocall went out to voters in New Hampshire in January telling them not to vote in the Democratic primary election, but to “save their votes” for the November presidential election. The voice delivering this advice was AI-generated and sounded almost exactly like Joe Biden. The incident led to the FCC making AI-generated robocalls illegal and Biden calling for a ban on AI voice impersonations.

It’s not just Russia looking to disrupt and influence the US elections using AI. Microsoft warned earlier this month that Chinese campaigns have continued to refine AI-generated or AI-enhanced content, creating videos, memes, and audio, among other formats, to build social media posts focusing on politically divisive topics.

In February, 20 major companies, including Amazon, Google, Meta, and X, signed an accord pledging to root out deepfake and generative AI content designed to influence or interfere with voters’ democratic right to vote.